Epileptic Disorders

Measuring expertise in identifying interictal epileptiform discharges

Volume 24, issue 3, June 2022

- Key words: interictal epileptiform discharge, EEG, epilepsy, assessment, expert and non-expert

- DOI: 10.1684/epd.2021.1409

- Page(s): 496-506

- Published in: 2022

Objective. Interictal epileptiform discharges (IEDs) on EEG are integral to diagnosing epilepsy. However, EEGs are interpreted by readers with and without specialty training, and there is no accepted method for assessing interpretation skill. We aimed to develop a test to quantify IED recognition skills.

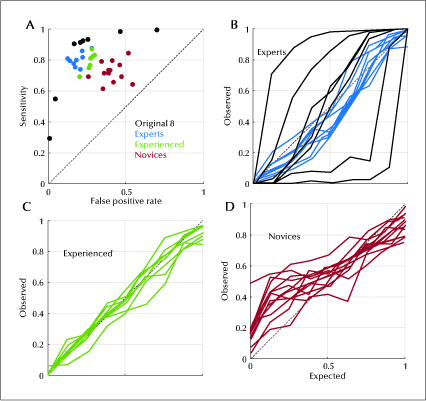

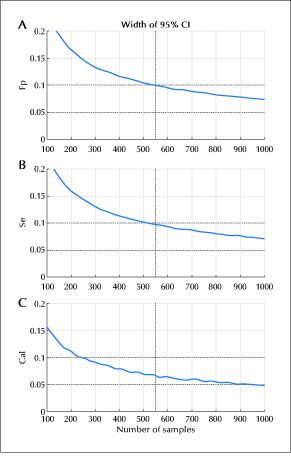

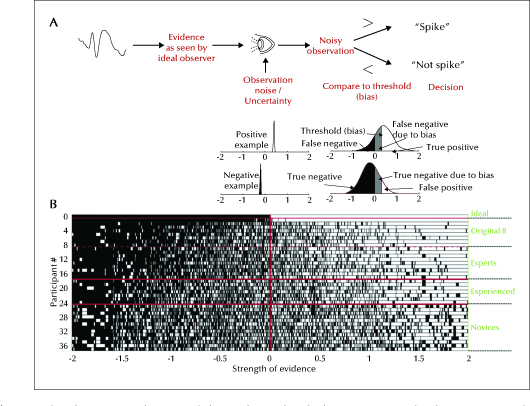

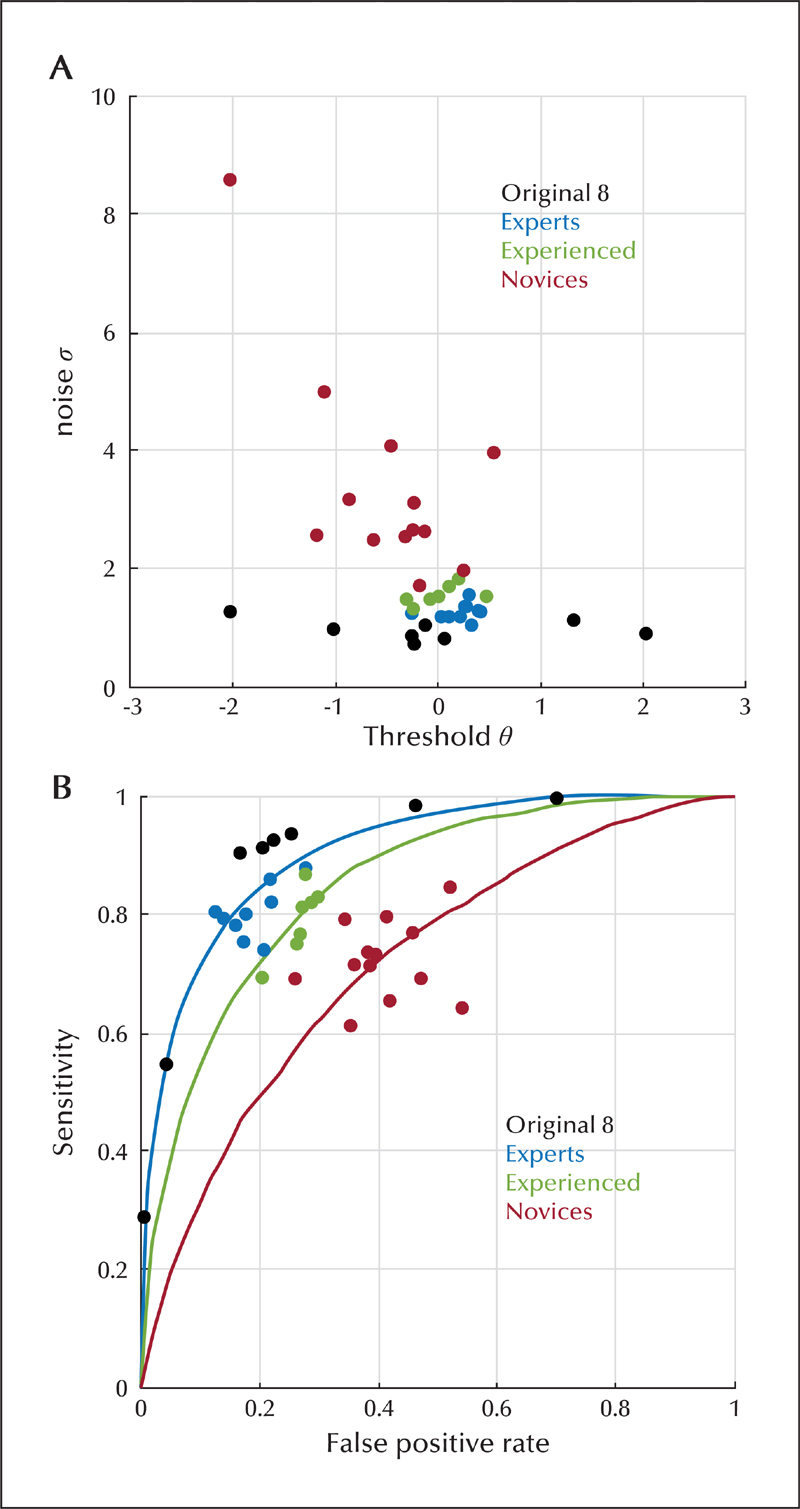

Methods. A total of 13,262 candidate IEDs were selected from EEGs and scored by eight fellowship-trained reviewers to establish a gold standard. An online test was developed to assess how well readers with different levels of training could distinguish candidate waveforms. Sensitivity, false positive rate and calibration were calculated for each reader. A simple mathematical model was developed to estimate each reader's skill and threshold in identifying IEDs and to construct a receiver operating characteristic (ROC) curve for each reader. We also investigated the number of IEDs needed to measure skill level with acceptable precision.
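
The abstract does not spell out the scoring formulas or the model, so the following is only a minimal sketch under stated assumptions: (1) each candidate's gold standard is the fraction of the eight reviewers who marked it as an IED; (2) candidates above a hypothetical 0.5 cutoff count as true IEDs; (3) calibration error is the reader's overall "yes" rate minus the mean gold-standard value; and (4) the "skill and threshold" model is a signal-detection-style probit, in which a reader answers "IED" when the gold-standard value plus Gaussian noise exceeds a personal threshold. Under these assumptions, the noise term plays the role of inverse skill. The function names and the grid-search fit are illustrative choices, not the authors' implementation.

```python
import math
import random
from typing import List, Sequence, Tuple


def phi(z: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def reader_metrics(gold: Sequence[float], answers: Sequence[int],
                   cutoff: float = 0.5) -> Tuple[float, float, float]:
    """Return (sensitivity, false positive rate, calibration error) for one reader.

    gold: assumed fraction of gold-standard reviewers who marked each candidate as an IED
    answers: the reader's binary responses (1 = IED, 0 = not IED)
    cutoff: hypothetical cutoff used to binarize the gold standard
    """
    pos = [a for g, a in zip(gold, answers) if g > cutoff]
    neg = [a for g, a in zip(gold, answers) if g <= cutoff]
    sensitivity = sum(pos) / len(pos)
    false_positive_rate = sum(neg) / len(neg)
    # Assumed definition: the reader's overall "yes" rate minus the mean gold-standard
    # value (negative means the reader calls fewer IEDs than the reference panel).
    calibration_error = sum(answers) / len(answers) - sum(gold) / len(gold)
    return sensitivity, false_positive_rate, calibration_error


def simulate_reader(gold: Sequence[float], noise: float, threshold: float,
                    rng: random.Random) -> List[int]:
    """Hypothetical reader: answers "IED" when gold + Gaussian noise exceeds the threshold."""
    return [1 if g + rng.gauss(0.0, noise) > threshold else 0 for g in gold]


def fit_noise_threshold(gold: Sequence[float],
                        answers: Sequence[int]) -> Tuple[float, float]:
    """Grid-search maximum-likelihood fit of P(yes | g) = Phi((g - threshold) / noise)."""
    best = (-math.inf, 0.0, 0.0)
    for i in range(1, 26):            # noise from 0.02 to 0.50
        noise = 0.02 * i
        for j in range(51):           # threshold from 0.00 to 1.00
            threshold = 0.02 * j
            log_lik = 0.0
            for g, a in zip(gold, answers):
                p = phi((g - threshold) / noise)
                p = min(max(p, 1e-12), 1.0 - 1e-12)  # clip to avoid log(0)
                log_lik += math.log(p) if a else math.log(1.0 - p)
            if log_lik > best[0]:
                best = (log_lik, noise, threshold)
    return best[1], best[2]
```

For example, passing `fit_noise_threshold` the output of `simulate_reader` with a noise of 0.1 and a threshold of 0.5 on a few hundred synthetic gold-standard values should return estimates close to those settings. The grid search keeps the sketch dependency-free; a real analysis would more likely use a numerical optimizer.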

Results. Twenty-nine raters completed the test: nine experts, seven experienced non-experts and thirteen novices. For experts, experienced non-experts and novices, respectively, median calibration errors were -0.056, 0.012 and 0.046; median sensitivities were 0.800, 0.811 and 0.715; and median false positive rates were 0.177, 0.272 and 0.396. The number of test questions needed to measure these scores with acceptable precision was 549. Our analysis showed that novices had a higher noise level (uncertainty) than experienced non-experts and experts. Using the calculated noise and threshold levels, we constructed receiver operating characteristic curves, which showed median area under the curve increasing from novices (0.735) to experienced non-experts (0.852) and experts (0.891).
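
Under the same kind of assumed noisy-threshold model (a reader answers "IED" with probability Phi((g - threshold) / noise), and candidates with a gold-standard value above a hypothetical 0.5 cutoff are true IEDs), one way to build a reader's ROC curve is to hold their noise fixed and sweep the threshold. The reader's own fitted threshold then picks their operating point on that curve. The sketch below uses hypothetical function names and synthetic gold-standard values. It illustrates why a higher noise level yields a lower area under the curve; it does not reproduce the reported values.

```python
import math
from typing import List, Sequence, Tuple


def phi(z: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def model_roc(gold: Sequence[float], noise: float, cutoff: float = 0.5,
              steps: int = 201) -> List[Tuple[float, float]]:
    """ROC points (FPR, TPR) for a reader with fixed noise, sweeping the threshold.

    Assumes P(yes | g) = Phi((g - threshold) / noise) and that candidates with
    gold > cutoff are true IEDs (both assumptions, not taken from the paper).
    """
    pos = [g for g in gold if g > cutoff]
    neg = [g for g in gold if g <= cutoff]
    points = [(0.0, 0.0)]                      # infinitely strict reader
    hi, lo = 1.0 + 6.0 * noise, -6.0 * noise   # from "almost never yes" to "almost always yes"
    for k in range(steps):
        threshold = hi - (hi - lo) * k / (steps - 1)
        tpr = sum(phi((g - threshold) / noise) for g in pos) / len(pos)
        fpr = sum(phi((g - threshold) / noise) for g in neg) / len(neg)
        points.append((fpr, tpr))
    points.append((1.0, 1.0))                  # infinitely lenient reader
    return points


def auc(points: Sequence[Tuple[float, float]]) -> float:
    """Area under an ROC curve by the trapezoidal rule (points ordered by increasing FPR)."""
    return sum((x1 - x0) * (y0 + y1) / 2.0
               for (x0, y0), (x1, y1) in zip(points, points[1:]))
```

With evenly spaced synthetic gold-standard values, `auc(model_roc(gold, 0.05))` comes out higher than `auc(model_roc(gold, 0.3))`, mirroring the ordering from novices to experts reported above.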

Significance. Expert and non-expert readers can be distinguished based on their ability to identify IEDs. This type of assessment could also be used to identify and correct differences in readers' thresholds for identifying IEDs.